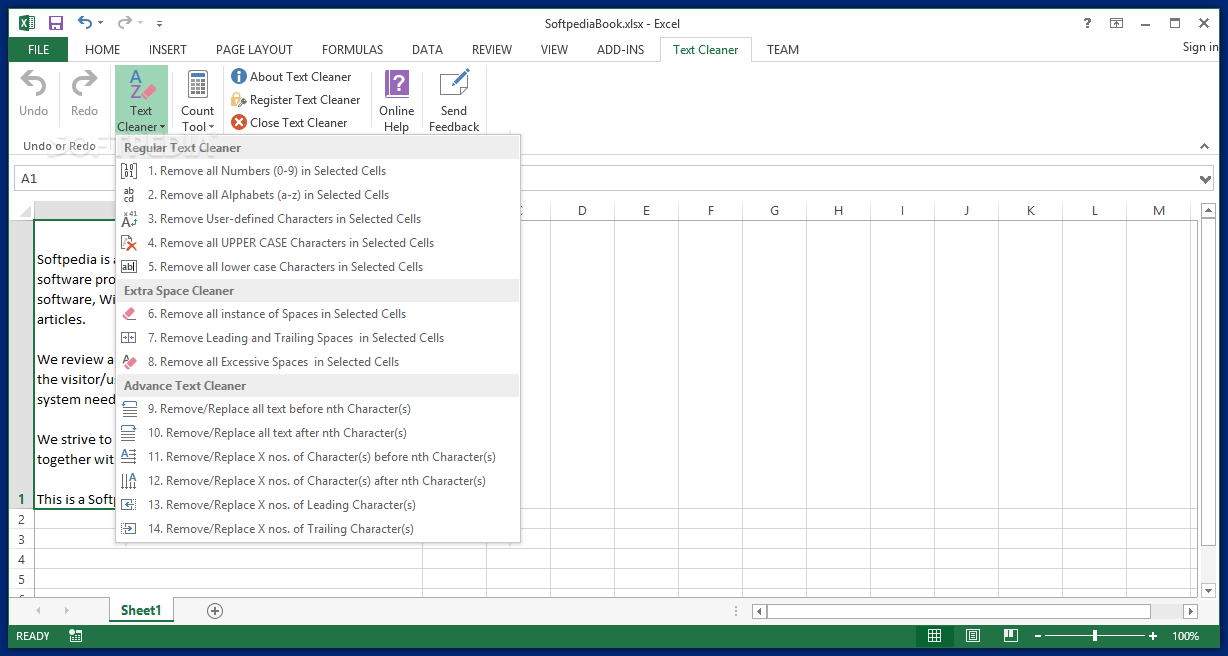

Where you take the frequency counts of the (cleaned) words that belong to a sentiment language (the numerator).Īnd you divide that by the total number of (cleaned) words in that document (the denominator). Sentiment using a proportional counts approach can be estimated as… Why is removing non-alphabetic characters important?īecause if we don’t do this, then we can essentially end up underestimating sentiment, for example.įor instance, one of the ways to estimate sentiment is to use a “proportional counts approach”. So we remove literally anything that is not a word. The 3 step process on how to clean text data starts with removing all the numbers, symbols, and anything that’s not an alphabetic character from the text. How to clean text data using the 3 Step Process Step 1: Remove numbers, symbols, and other unwanted characters Of course, you can also continue to read about the whole process further below. And we do actually have a video that explains the full process, viewable here: Well, it’s actually a three-step process. Now we literally only have the words, without any unwanted characters. You can see that all of the symbols have gone. And each element inside the list is a “token” (essentially a word).

This state – of transforming text into a list of words or a “bag of words” – is also called “tokenization”. We’ve now moved away from a blob of text to something that’s relatively more structured in that it is a list of words. We’re literally only really interested in the words.Īnd once you go through the cleaning process, here’s what the cleaned text would look like…

Or the symbols.Īnd nor do we care about punctuation marks. We don’t particularly care about the numbers. Ultimately, the only thing we’re really interested in is the actual words. If you’re interested in learning how to leverage the power of text data for investment analysis while working with real world data, you should definitely check out the course. This Article features concepts that are covered extensively in our course on Investment Analysis with Natural Language Processing (NLP). Related Course: Investment Analysis with Natural Language Processing (NLP) There’s a variety of different special characters in here. There’s a variety of different words.Īnd a lot of it is not something we can actually use. You’ve got some numbers, dates, parentheses, percentage signs, etc. So you’ve got some words. And then you’ve got your punctuation marks. This is actually an excerpt from a management discussion and analysis or MD&A filing.Īnd you can see that this looks like any ordinary blob of financial text. So right here, you’ve got just a bunch of text… To help you understand what this looks like, let’s actually take a look at what a blob of text looks like. And then see what the cleaned text looks like. But the idea is to move away from a blob of text to a format that’s a little more structured. Text data by definition, and by construction is unstructured. Think of a column or a row in an Excel spreadsheet, or in a pandas dataframe.

Put differently, we’re going from, say, a text file or. And textual analysis is no exception.įormally, text cleaning essentially involves vectorising text data. Ultimately, it’s just a process of transforming raw text into a format that’s suitable for textual analysis.Ĭleaning text data is imperative for any sort of textual analysis and naturally, the same applies for sentiment analysis or more broadly, text mining as well.Īnd this holds regardless of whether you’re conducting sentiment analysis (or other textual analysis) “manually”, or whether you’re using some sort of machine learning algorithms.ĭata cleansing is imperative for any sort of analysis. In this article, we’re going to learn how to clean text data.
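To make Step 1 and the proportional counts idea a little more concrete, here is a minimal Python sketch. It is not the code from the course or the video; the function names `clean_and_tokenize` and `proportional_sentiment`, the sample sentence, and the tiny word list are all made up for illustration. It also assumes that "alphabetic" means the letters A to Z, and that sentiment words are matched case-insensitively.

```python
import re


def clean_and_tokenize(text: str) -> list[str]:
    """Step 1 sketch: drop everything that is not a letter, then split into tokens."""
    # Replace each run of non-alphabetic characters (digits, symbols,
    # punctuation) with a single space so neighbouring words stay separated.
    letters_only = re.sub(r"[^A-Za-z]+", " ", text)
    return letters_only.split()


def proportional_sentiment(tokens: list[str], sentiment_words: set[str]) -> float:
    """Proportional counts: sentiment-word count divided by total token count."""
    if not tokens:
        return 0.0  # empty document: avoid dividing by zero
    # Case-insensitive matching is an assumption of this sketch.
    hits = sum(1 for token in tokens if token.lower() in sentiment_words)
    return hits / len(tokens)


# Made-up sentence and word list, for illustration only.
sample = "Revenue fell 12% to $4.5 million, a loss of $0.5 million (2019: profit)."
negative_words = {"fell", "loss"}

cleaned_tokens = clean_and_tokenize(sample)
raw_tokens = sample.split()  # naive whitespace split, no cleaning

print(cleaned_tokens)
print(proportional_sentiment(cleaned_tokens, negative_words))  # 2 hits out of 9 tokens
print(proportional_sentiment(raw_tokens, negative_words))      # 2 hits out of 13 tokens
```

In this toy example the uncleaned text scores lower, because numbers and symbol-laden fragments still count towards the denominator. That is the underestimation problem Step 1 is meant to avoid.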
